As enterprises move from experimental AI projects to large-scale production systems, the real challenge is no longer model development but operational execution. This is where a well-structured AI Inference Strategy becomes the foundation of scalable intelligence. At scale, even small inefficiencies in inference can multiply into significant performance delays, cost overruns, and system instability. A robust AI Inference Strategy ensures that machine learning models deliver consistent, low-latency predictions while optimizing infrastructure usage across cloud and on-prem environments.

When AI systems scale across millions of users, the importance of a carefully designed AI Inference Strategy increases dramatically. It directly influences how efficiently systems respond to requests, how resources are allocated, and how sustainable AI operations remain over time.

Scaling AI Systems with a Strong AI Inference Strategy

Scaling AI is not just about adding more compute power; it is about designing an intelligent AI Inference Strategy that can handle growing workloads efficiently. At scale, inference becomes the most frequent and resource-intensive part of the AI lifecycle.

A scalable AI Inference Strategy ensures that models can process high volumes of requests without performance degradation. It distributes workloads intelligently across available infrastructure, whether cloud-based or on-prem. Without a structured AI Inference Strategy, systems quickly become overloaded, leading to slow response times and degraded user experience.
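To make "distributes workloads intelligently" more concrete, here is a minimal Python sketch of least-loaded routing. It assumes a pool of backends that each have a fixed concurrency capacity. The `Backend` class, the `route` and `release` functions, and the backend names are hypothetical illustrations, not part of any particular serving framework.

```python
from dataclasses import dataclass


@dataclass
class Backend:
    """A hypothetical inference backend, e.g. a cloud or on-prem GPU pool."""
    name: str
    capacity: int       # max concurrent requests this backend can serve
    in_flight: int = 0  # requests currently being processed

    @property
    def load(self) -> float:
        # Relative load, so pools of different sizes can be compared fairly.
        return self.in_flight / self.capacity


def route(backends: list[Backend]) -> Backend:
    """Send the next request to the available backend with the lowest relative load."""
    available = [b for b in backends if b.in_flight < b.capacity]
    if not available:
        raise RuntimeError("all backends are saturated; queue or shed the request")
    chosen = min(available, key=lambda b: b.load)
    chosen.in_flight += 1
    return chosen


def release(backend: Backend) -> None:
    """Mark one request on this backend as finished."""
    backend.in_flight -= 1


pool = [Backend("cloud-a", capacity=8), Backend("on-prem-gpu", capacity=4)]
for _ in range(6):
    route(pool)
print([(b.name, b.in_flight) for b in pool])  # [('cloud-a', 4), ('on-prem-gpu', 2)]
```

Routing on relative load (in-flight requests divided by capacity) rather than raw request counts lets a larger pool absorb proportionally more traffic than a smaller one. Here, six requests split 4:2 across pools with an 8:4 capacity ratio.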

Enterprises that prioritize AI Inference Strategy early in their scaling journey are better equipped to handle rapid growth and unpredictable demand patterns.

Performance Challenges in Large-Scale AI Inference Strategy

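At scale, performance optimization becomes a critical component of AI Inference Strategy. Even minor per-request inefficiencies in model execution add up when multiplied across millions of requests. For example, an extra 5 ms per request across 10 million daily requests adds roughly 14 hours of compute time per day, and under heavy load that extra work shows up as longer queues and higher latency.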

A well-optimized AI Inference Strategy focuses on reducing response time and cost per request through techniques such as model compression, caching, batching, and hardware acceleration. Batching in particular trades a small queuing delay for much higher throughput, so batch sizes need to be tuned against latency targets. Edge computing is also becoming an important part of modern AI Inference Strategy, as it brings computation closer to data sources and reduces network delays.
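Caching and batching combine naturally. The Python sketch below is a simplified illustration under stated assumptions: requests have already been collected into a list, inputs are hashable, and the model is any function that maps a batch of inputs to a batch of outputs. A production server would typically also collect requests over a short time window before batching. The names `cached_batched_predict` and `toy_model` are hypothetical.

```python
from typing import Callable, Hashable, Sequence


def cached_batched_predict(
    inputs: Sequence[Hashable],
    model_fn: Callable[[list], list],
    cache: dict,
    max_batch_size: int = 32,
) -> list:
    """Answer repeated inputs from a cache and send the rest to the model in batches."""
    # Unique inputs not yet cached, in first-seen order (dict.fromkeys removes duplicates).
    misses = [x for x in dict.fromkeys(inputs) if x not in cache]
    # One model call per batch of up to max_batch_size inputs, not one call per request.
    for start in range(0, len(misses), max_batch_size):
        batch = misses[start:start + max_batch_size]
        for x, y in zip(batch, model_fn(batch)):
            # Unbounded here; a real cache would cap its size (e.g. LRU) and expire entries.
            cache[x] = y
    return [cache[x] for x in inputs]


# Usage with a toy "model" that doubles its inputs and records each batch size.
calls = []


def toy_model(batch):
    calls.append(len(batch))
    return [x * 2 for x in batch]


cache = {}
print(cached_batched_predict([1, 2, 2, 3, 1], toy_model, cache, max_batch_size=2))  # [2, 4, 4, 6, 2]
print(calls)  # [2, 1]
```

Five requests with three unique inputs are served by two model calls, and repeating the same requests afterwards would be answered entirely from the cache.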

Without a performance-focused AI Inference Strategy, large-scale AI systems struggle to maintain consistency and reliability under heavy workloads.

Cost Implications of AI Inference Strategy at Scale

Cost efficiency becomes increasingly important as AI systems grow. A poorly managed AI Inference Strategy can cause cloud computing expenses to grow much faster than traffic, because oversized or idle instances are billed whether or not they are serving requests.

In cloud environments, scaling inference workloads without proper optimization can result in unnecessary resource consumption. This makes AI Inference Strategy a key factor in controlling operational costs.

On-prem infrastructure, while offering more predictable costs, can also become expensive if the AI Inference Strategy does not efficiently utilize hardware resources. Idle compute capacity and poor workload distribution increase overall expenses.

A balanced AI Inference Strategy helps organizations optimize cost per inference while maintaining performance standards.
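Cost per inference is simple arithmetic: the hourly cost of an instance divided by the number of inferences it actually serves in that hour. The Python sketch below makes the role of utilization explicit. The instance price and throughput are hypothetical numbers chosen for illustration, and `cost_per_million` is a hypothetical helper.

```python
def cost_per_million(hourly_cost: float, peak_rps: float, utilization: float) -> float:
    """Dollar cost of one million inferences on a single instance.

    hourly_cost: instance price per hour (cloud rate or amortized on-prem hardware)
    peak_rps:    inferences per second the instance sustains at full load
    utilization: average fraction of that peak actually used, in (0, 1]
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    served_per_hour = peak_rps * 3600 * utilization
    return hourly_cost / served_per_hour * 1_000_000


# Hypothetical instance: $2.50/hour, 200 inferences/s at full load.
print(round(cost_per_million(2.50, 200, 0.4), 2))  # 8.68 at 40% utilization
print(round(cost_per_million(2.50, 200, 0.8), 2))  # 4.34 at 80% utilization
```

Doubling average utilization halves the cost per inference, which is why idle capacity, whether in the cloud or on-prem, shows up directly in unit cost.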

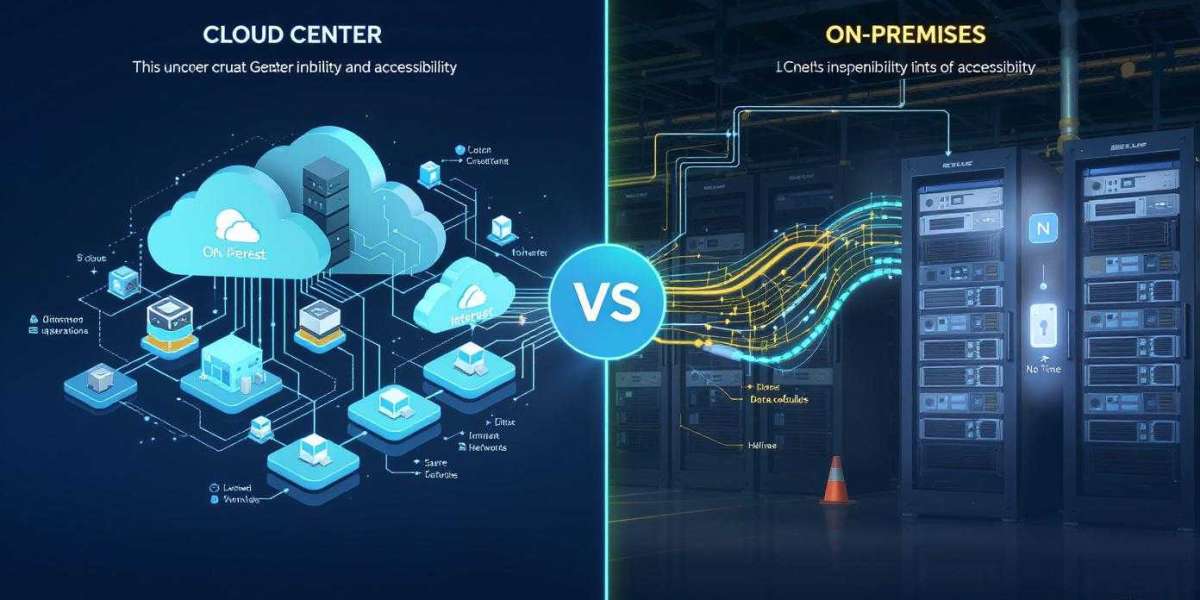

Cloud vs On-Prem Tradeoffs in Scalable AI Inference Strategy

Choosing between cloud and on-prem infrastructure is a critical decision in designing an AI Inference Strategy at scale. Cloud-based systems offer elasticity, allowing enterprises to scale up or down based on demand. This makes cloud environments ideal for dynamic workloads.

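On-prem systems, on the other hand, offer greater control over hardware and data, lower latency when they sit close to the data sources or users they serve, and tighter control over data residency and security. For high-performance or latency-sensitive applications, an on-prem AI Inference Strategy may be more suitable.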

Many enterprises adopt a hybrid AI Inference Strategy that combines both models. This allows them to optimize cost, performance, and compliance requirements simultaneously.
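A hybrid strategy ultimately comes down to a routing policy. The Python sketch below shows one possible policy, assuming each request carries a flag for regulated data and that on-prem capacity is tracked as a simple in-flight count. The ordering (compliance, then cost, then elasticity), the `InferenceRequest` class, and the `choose_site` function are hypothetical illustrations rather than a standard.

```python
from dataclasses import dataclass


@dataclass
class InferenceRequest:
    # Hypothetical flag: True if the payload contains data that must stay on-prem.
    regulated_data: bool


def choose_site(req: InferenceRequest, on_prem_in_flight: int, on_prem_capacity: int) -> str:
    """Return 'on-prem' or 'cloud' for one request under a simple hybrid policy."""
    if req.regulated_data:
        # Compliance first: regulated data stays on-prem even when that pool is busy
        # (the request waits in the on-prem queue instead of bursting to the cloud).
        return "on-prem"
    if on_prem_in_flight < on_prem_capacity:
        # Cost next: use already-paid-for on-prem hardware while it has spare capacity.
        return "on-prem"
    # Elasticity last: overflow traffic bursts to cloud capacity.
    return "cloud"


print(choose_site(InferenceRequest(regulated_data=False), on_prem_in_flight=3, on_prem_capacity=4))  # on-prem
print(choose_site(InferenceRequest(regulated_data=False), on_prem_in_flight=4, on_prem_capacity=4))  # cloud
print(choose_site(InferenceRequest(regulated_data=True), on_prem_in_flight=4, on_prem_capacity=4))   # on-prem
```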

Latency Optimization in AI Inference Strategy

Latency is one of the most important performance metrics in any AI Inference Strategy. At scale, even small delays can significantly impact user experience.
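Because averages hide slow outliers, latency is usually tracked as percentiles such as p95 and p99. The Python sketch below uses the nearest-rank definition of a percentile on a hypothetical set of 100 request latencies. Monitoring systems may use interpolated or approximate percentiles instead, so their exact values can differ slightly.

```python
import math


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile: the smallest sample that is >= p% of all samples."""
    if not samples or not 0 < p <= 100:
        raise ValueError("need at least one sample and 0 < p <= 100")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank, always >= 1 here
    return ordered[rank - 1]


# Hypothetical traffic: 95 fast requests and 5 slow ones.
latencies_ms = [20.0] * 95 + [400.0] * 5
print(sum(latencies_ms) / len(latencies_ms))  # 39.0 (mean)
print(percentile(latencies_ms, 50), percentile(latencies_ms, 95), percentile(latencies_ms, 99))  # 20.0 20.0 400.0
```

A 39 ms average looks healthy, but the p99 shows that one request in a hundred takes 400 ms, which is the delay those users actually experience.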

A high-performance AI Inference Strategy minimizes latency through optimized model architectures, efficient data pipelines, and distributed computing techniques. Edge inference also plays a key role in reducing round-trip delays.

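For real-time applications such as fraud detection, recommendation systems, and autonomous systems, a latency-optimized AI Inference Strategy is essential: a prediction that arrives after the decision point, such as after a payment has already been approved, is of little value no matter how accurate it is.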

Infrastructure Efficiency and Resource Allocation

Efficient resource allocation is a core principle of scalable AI Inference Strategy. As workloads grow, systems must intelligently distribute compute resources to avoid bottlenecks.

A strong AI Inference Strategy ensures that GPU, CPU, and memory resources are utilized effectively. It prevents over-provisioning and underutilization, both of which negatively impact performance and cost.
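One way to avoid both over-provisioning and underutilization is to size capacity from measured traffic. Little's law states that the average number of requests in flight equals the arrival rate multiplied by the average time each request spends in the system. The Python sketch below applies it, assuming each replica can serve a fixed number of concurrent requests and that replicas should run at about 70% of that to leave headroom. Both the 70% target and the `replicas_needed` helper are hypothetical choices for illustration.

```python
import math


def replicas_needed(requests_per_s: float, latency_ms: float,
                    concurrency_per_replica: int, target_utilization: float = 0.7) -> int:
    """Estimate replica count with Little's law (in-flight = arrival rate x latency)."""
    in_flight = requests_per_s * latency_ms / 1000  # average concurrent requests
    usable_per_replica = concurrency_per_replica * target_utilization  # keep headroom for bursts
    return math.ceil(in_flight / usable_per_replica)


# Hypothetical workload: 2,000 requests/s at 50 ms each, 8 concurrent requests per replica.
print(replicas_needed(2000, 50, 8))  # 18 (100 requests in flight / 5.6 usable slots per replica)
```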

Automation tools and orchestration platforms are increasingly being integrated into AI Inference Strategy to improve infrastructure efficiency at scale.
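Orchestration platforms automate this kind of sizing. Kubernetes' Horizontal Pod Autoscaler, for example, documents its core rule as `desiredReplicas = ceil[currentReplicas * (currentMetricValue / desiredMetricValue)]` and skips scaling when that ratio is within a tolerance (0.1 by default). The function below re-implements only that formula in Python for illustration. It is not Kubernetes code: the real controller adds further behavior such as stabilization windows and handling of missing metrics, and the `min_replicas`/`max_replicas` defaults here are hypothetical.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 50,
                     tolerance: float = 0.1) -> int:
    """Proportional scaling rule in the style of the Kubernetes HPA algorithm."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target: avoid flapping
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


# 4 replicas averaging 90% GPU utilization against a 60% target.
print(desired_replicas(4, 90, 60))  # 6
print(desired_replicas(4, 63, 60))  # 4 (within tolerance, no change)
print(desired_replicas(4, 20, 60))  # 2 (scale down)
```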

Reliability and System Stability

At enterprise scale, system reliability becomes a key concern in AI Inference Strategy. Any downtime or inconsistency can lead to significant business disruption.

A reliable AI Inference Strategy incorporates failover mechanisms, load balancing, and redundancy planning. These ensure continuous service availability even under heavy load or partial system failures.
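Failover can be as simple as trying replicas in order until one answers. The Python sketch below assumes each replica is exposed as a plain callable that raises `ConnectionError` or `TimeoutError` when it is unreachable. `predict_with_failover`, `primary`, and `secondary` are hypothetical names, and a production client would add per-call timeouts, backoff between retries, and health checks so that known-bad replicas are skipped.

```python
from typing import Any, Callable, Sequence


def predict_with_failover(payload: Any, endpoints: Sequence[Callable[[Any], Any]],
                          attempts_per_endpoint: int = 1) -> Any:
    """Try each replica in order and fail over to the next one when a call fails."""
    last_error = None
    for endpoint in endpoints:
        for _ in range(attempts_per_endpoint):
            try:
                return endpoint(payload)
            except (ConnectionError, TimeoutError) as exc:
                last_error = exc  # remember the failure and keep going
    raise RuntimeError("all inference endpoints failed") from last_error


def primary(payload):
    raise ConnectionError("primary replica down")


def secondary(payload):
    return {"score": 0.42, "served_by": "secondary"}


print(predict_with_failover({"id": 1}, [primary, secondary]))  # {'score': 0.42, 'served_by': 'secondary'}
```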

Without a reliability-focused AI Inference Strategy, large-scale AI systems become vulnerable to instability and operational risks.

Security Considerations in Scalable AI Inference Strategy

Security remains a top priority in any AI Inference Strategy, especially at scale. As data volumes increase, so does the risk of exposure and breaches.

A secure AI Inference Strategy includes encryption, access control, and secure model deployment pipelines. It also ensures compliance with industry regulations and data protection standards.
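Access control for an inference endpoint often starts with verifying who sent a request and that it was not altered in transit. The Python sketch below shows one common approach, HMAC request signing with a shared secret, using only the standard `hmac` and `hashlib` modules. It covers authentication and integrity only, not encryption (which is normally handled by TLS). The secret, the request body, and the `sign`/`is_authorized` helpers are hypothetical.

```python
import hashlib
import hmac


def sign(body: bytes, secret: bytes) -> str:
    """Client side: compute an HMAC-SHA256 signature over the raw request body."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def is_authorized(body: bytes, signature: str, secret: bytes) -> bool:
    """Server side: recompute the signature and compare it in constant time."""
    expected = sign(body, secret)
    return hmac.compare_digest(expected, signature)


secret = b"hypothetical-shared-secret"  # in practice, loaded from a secrets manager
body = b'{"features": [1, 2, 3]}'
sig = sign(body, secret)
print(is_authorized(body, sig, secret))                        # True
print(is_authorized(b'{"features": [9, 9, 9]}', sig, secret))  # False (tampered body)
```

`hmac.compare_digest` is used instead of `==` so that the comparison time does not reveal how many leading characters of a forged signature were correct.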

At scale, security must be embedded into every layer of the AI Inference Strategy to protect sensitive information and maintain trust.

Real-World Enterprise Impact

In real-world applications, AI Inference Strategy plays a critical role in shaping user experiences and business outcomes. In e-commerce, it powers recommendation engines that handle millions of daily interactions. In finance, it supports fraud detection systems that must respond instantly. In healthcare, it enables diagnostic tools that assist medical professionals in real time.

Each of these applications relies on a highly optimized AI Inference Strategy to function effectively at scale. Without it, system performance and reliability would degrade significantly.

Important Information for Enterprise Scaling

As AI adoption continues to expand, organizations must treat AI Inference Strategy as a core architectural decision rather than a secondary technical choice. Scaling AI successfully requires continuous optimization, monitoring, and adaptation of inference systems.

Enterprises that invest in a strong AI Inference Strategy early in their scaling journey are better positioned to achieve sustainable performance, cost efficiency, and operational resilience. In the long run, AI success is defined not just by model accuracy but by how effectively the AI Inference Strategy supports real-world execution at scale.

At BusinessInfoPro, we equip entrepreneurs, small business owners, and professionals with practical insights, proven strategies, and essential tools to drive growth. By breaking down complex concepts in business, marketing, and operations, we transform challenges into clear opportunities, helping you confidently navigate today’s fast-paced market. Your success is at the heart of what we do because as you thrive, so do we.